3D Series 4.12: Blending in the Refraction Using the Fresnel Term

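Now that we've added some ripples to the water, it's time to blend in the color of the bottom using the refraction map. We'll keep the same ripple effect as in the previous chapter. We already know the reflective and the refractive color, so only one question remains: for each pixel, how much of each color should we use?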

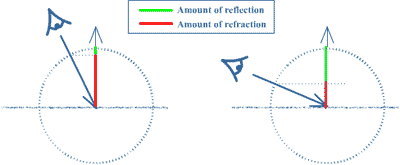

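The answer is shown in the figure above. The horizontal line represents the water surface. The vector pointing up is the normal vector of the pixel. The other vector is called the eye vector and connects the camera and the pixel (the shader code later in this chapter computes it pointing from the pixel toward the camera, so that its dot product with an upward normal is positive while the camera is above the water). The green length along the normal vector indicates the amount of reflection for the current pixel, the red length the amount of refraction.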

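So how do we find the lengths of the green and red segments? We need to project the eye vector onto the normal vector, which is done with a dot product: with both vectors normalized, the dot product of the eye vector and the normal gives the length of the red segment. The length of the green segment is then (1 - length of the red segment).

That's enough theory, so let's move on to the HLSL code. As you can see, we need the position of the camera, so add this variable:

float3 xCamPos;

Before doing the Fresnel calculation in the shader, we first need the refractive color of the water. It is obtained the same way as the reflective color: project the texture, add some ripple perturbation to the coordinates, and sample the refraction map. For the projective texturing we again need the 2D screen coordinates of the pixel, as seen by the camera that rendered the refraction map. That camera is simply our normal camera, so add the following to the output structure and the vertex shader: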

struct WVertexToPixel

{

float4 Position : POSITION;

float4 ReflectionMapSamplingPos : TEXCOORD1;

float2 BumpMapSamplingPos : TEXCOORD2;

float4 RefractionMapSamplingPos : TEXCOORD3;

float4 Position3D : TEXCOORD4;

};

…

Output.RefractionMapSamplingPos = mul(inPos, preWorldViewProjection);

Output.Position3D = mul(inPos, xWorld);

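For each pixel we also need its 3D position to compute the eye vector, which is what the last line added to the vertex shader provides. The vertices of the water are already defined in absolute world space, but to stay general we still multiply the position by the world matrix.

Then, in the pixel shader, first store the reflective color obtained in the previous chapter in a variable: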

float4 reflectiveColor = tex2D(ReflectionSampler, perturbatedTexCoords);

Now do the same as in the previous chapter, but this time for the refraction map:

float2 ProjectedRefrTexCoords;

ProjectedRefrTexCoords.x = PSIn.RefractionMapSamplingPos.x/PSIn.RefractionMapSamplingPos.w/2.0f + 0.5f;

ProjectedRefrTexCoords.y = -PSIn.RefractionMapSamplingPos.y/PSIn.RefractionMapSamplingPos.w/2.0f + 0.5f;

float2 perturbatedRefrTexCoords = ProjectedRefrTexCoords + perturbation;

float4 refractiveColor = tex2D(RefractionSampler, perturbatedRefrTexCoords);
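The divide-by-w and remapping step above can be checked on the CPU. The following is a minimal sketch in Python rather than HLSL, so it can be run without a GPU; the function name `projected_tex_coords` and the sample clip-space positions are assumptions made for illustration, and the ripple perturbation is left out.

```python
def projected_tex_coords(clip_pos):
    """Map a clip-space position (x, y, z, w) to [0, 1] texture coordinates."""
    x, y, _z, w = clip_pos
    u = x / w / 2.0 + 0.5    # x/w lies in [-1, 1]; remap to [0, 1]
    v = -y / w / 2.0 + 0.5   # y is negated: texture v grows downward
    return (u, v)

# Hypothetical clip-space positions, all with w = 10.
center = projected_tex_coords((0.0, 0.0, 5.0, 10.0))
top_left = projected_tex_coords((-10.0, 10.0, 5.0, 10.0))
bottom_right = projected_tex_coords((10.0, -10.0, 5.0, 10.0))

print(center, top_left, bottom_right)
```

A pixel in the middle of the screen samples the middle of the map, and the top-left corner of the screen maps to texture coordinate (0, 0), which is why the y component is negated.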

You may want to look at the refraction map on its own first. To do so, simply assign refractiveColor to Output.Color and run the code.

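Now that you know both the reflective and the refractive color, you can blend them according to the Fresnel term. As explained earlier, to get the Fresnel term you need the eye vector: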

float3 eyeVector = normalize(xCamPos - PSIn.Position3D.xyz);

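Normally the normal is passed to the vertex shader by the XNA program. In this simple example, we let the normal of every water pixel point straight up: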

float3 normalVector = float3(0,1,0);

Now that both vectors are known, we can find the Fresnel term:

float fresnelTerm = dot(eyeVector, normalVector);
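To get a feeling for the values this dot product produces, the same calculation can be sketched in Python. This is only an illustration under assumed camera positions; the helper names (`normalize`, `dot`, `fresnel_term`) are hypothetical and merely mirror the HLSL intrinsics used above.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    """Dot product of two vectors of equal size."""
    return sum(x * y for x, y in zip(a, b))

def fresnel_term(cam_pos, pixel_pos, normal=(0.0, 1.0, 0.0)):
    """Project the normalized pixel-to-camera vector onto the normal."""
    eye = normalize(tuple(c - p for c, p in zip(cam_pos, pixel_pos)))
    return dot(eye, normal)

pixel = (0.0, 0.0, 0.0)  # assumed water pixel at the origin
straight_down = fresnel_term((0.0, 10.0, 0.0), pixel)  # camera directly above
diagonal = fresnel_term((10.0, 10.0, 0.0), pixel)      # 45 degrees
grazing = fresnel_term((100.0, 1.0, 0.0), pixel)       # almost level with the water

print(straight_down, round(diagonal, 4), round(grazing, 4))
```

Looking straight down gives a term of 1 (all refraction), a 45 degree view gives about 0.707, and a grazing view gives a value close to 0 (almost all reflection). The term stays within 0 and 1 only while the camera is above the water; below it the dot product becomes negative.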

And finally obtain the blended color:

Output.Color = lerp(reflectiveColor, refractiveColor, fresnelTerm);

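This color is an interpolation between reflectiveColor and refractiveColor. That's all for the HLSL code. In XNA, you only need to pass the camera position to the effect in the DrawWater method: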

effect.Parameters["xCamPos"].SetValue(cameraPosition);

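Now run the code and you get water with both refractive and reflective colors!

As a final touch, we blend in a dull water color, which makes the water look a little "dirty". First store the blended color in combinedColor instead of writing it to the output: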

float4 combinedColor = lerp(reflectiveColor, refractiveColor, fresnelTerm);

Define a dull water color, a bluish gray:

float4 dullColor = float4(0.3f, 0.3f, 0.5f, 1.0f);

And blend them!

Output.Color = lerp(combinedColor, dullColor, 0.2f);

This line takes 20% of dullColor and 80% of combinedColor, blends them, and outputs the result! Here is a screenshot of the program:

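When you run the code, you'll see nice-looking water. It has reflection and refraction, plus a touch of "dirty" color that makes it look more realistic.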
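The two blending steps can be followed with concrete numbers. The sketch below is in Python and assumes a pure white reflective color and a pure black refractive color, chosen only to make the arithmetic easy to read; `lerp` follows the definition of the HLSL intrinsic, x + s*(y - x), and the name `water_color` is hypothetical.

```python
def lerp(a, b, t):
    """Component-wise linear interpolation: a + (b - a) * t."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))

def water_color(reflective, refractive, fresnel_term,
                dull=(0.3, 0.3, 0.5, 1.0), dull_amount=0.2):
    """Blend reflection and refraction, then mix in the dull water color."""
    combined = lerp(reflective, refractive, fresnel_term)
    return lerp(combined, dull, dull_amount)

# Assumed sample colors (RGBA), not taken from the real render targets.
reflective = (1.0, 1.0, 1.0, 1.0)
refractive = (0.0, 0.0, 0.0, 1.0)

looking_down = water_color(reflective, refractive, 1.0)  # roughly (0.06, 0.06, 0.1, 1.0)
grazing = water_color(reflective, refractive, 0.0)       # roughly (0.86, 0.86, 0.9, 1.0)

print([round(c, 2) for c in looking_down])
print([round(c, 2) for c in grazing])
```

With a Fresnel term of 1 only the refractive color survives the first blend, with 0 only the reflective color does; in both cases the dull color then contributes a fixed 20%.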

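We could add some more advanced things. For example, we assumed that the normal of the water always points up, but that isn't true on the ripples. And what about the lighting on the ripples? Lighting is affected by the direction of the normal. This will be added at the end of this series, but in the next chapter we'll first make another big improvement to the realism of the water: making the ripples move across the surface.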

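You can try the following exercise to practice what you've learned:

- Try other dull water colors and blend factors.

I haven't listed the complete XNA code, because the only addition is setting the xCamPos variable.

Below is the complete HLSL code. Compared to the previous chapter, the changes are the xCamPos constant, the two new fields of WVertexToPixel, and the refraction and Fresnel code in WaterVS and WaterPS: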

//----------------------------------------------------

//-- --

//-- www.riemers.net --

//-- Series 4: Advanced terrain --

//-- Shader code --

//-- --

//----------------------------------------------------

//------- Constants --------

float4x4 xView;

float4x4 xReflectionView;

float4x4 xProjection;

float4x4 xWorld;

float3 xLightDirection;

float xAmbient;

bool xEnableLighting;

float xWaveLength;

float xWaveHeight;

float3 xCamPos;

//------- Texture Samplers --------

Texture xTexture;

sampler TextureSampler = sampler_state { texture = <xTexture> ; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = mirror; AddressV = mirror;};

Texture xTexture0;

sampler TextureSampler0 = sampler_state { texture = <xTexture0> ; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = wrap; AddressV = wrap;};

Texture xTexture1;

sampler TextureSampler1 = sampler_state { texture = <xTexture1> ; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = wrap; AddressV = wrap;};

Texture xTexture2;

sampler TextureSampler2 = sampler_state { texture = <xTexture2> ; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = mirror; AddressV = mirror;};

Texture xTexture3;

sampler TextureSampler3 = sampler_state { texture = <xTexture3> ; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = mirror; AddressV = mirror;};

Texture xReflectionMap;

sampler ReflectionSampler = sampler_state { texture = <xReflectionMap> ; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = mirror; AddressV = mirror;};

Texture xRefractionMap;

sampler RefractionSampler = sampler_state { texture = <xRefractionMap> ; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = mirror; AddressV = mirror;};

Texture xWaterBumpMap;

sampler WaterBumpMapSampler = sampler_state { texture = <xWaterBumpMap> ; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = mirror; AddressV = mirror;};

//------- Technique: Textured --------

struct TVertexToPixel

{

float4 Position : POSITION;

float4 Color : COLOR0;

float LightingFactor: TEXCOORD0;

float2 TextureCoords: TEXCOORD1;

};

struct TPixelToFrame

{

float4 Color : COLOR0;

};

TVertexToPixel TexturedVS( float4 inPos : POSITION, float3 inNormal: NORMAL, float2 inTexCoords: TEXCOORD0)

{

TVertexToPixel Output = (TVertexToPixel)0;

float4x4 preViewProjection = mul (xView, xProjection);

float4x4 preWorldViewProjection = mul (xWorld, preViewProjection);

Output.Position = mul(inPos, preWorldViewProjection);

Output.TextureCoords = inTexCoords;

float3 Normal = normalize(mul(normalize(inNormal), xWorld));

Output.LightingFactor = 1;

if (xEnableLighting)

Output.LightingFactor = saturate(dot(Normal, -xLightDirection));

return Output;

}

TPixelToFrame TexturedPS(TVertexToPixel PSIn)

{

TPixelToFrame Output = (TPixelToFrame)0;

Output.Color = tex2D(TextureSampler, PSIn.TextureCoords);

Output.Color.rgb *= saturate(PSIn.LightingFactor + xAmbient);

return Output;

}

technique Textured_2_0

{

pass Pass0

{

VertexShader = compile vs_2_0 TexturedVS();

PixelShader = compile ps_2_0 TexturedPS();

}

}

technique Textured

{

pass Pass0

{

VertexShader = compile vs_1_1 TexturedVS();

PixelShader = compile ps_1_1 TexturedPS();

}

}

//------- Technique: Multitextured --------

struct MTVertexToPixel

{

float4 Position : POSITION;

float4 Color : COLOR0;

float3 Normal : TEXCOORD0;

float2 TextureCoords : TEXCOORD1;

float4 LightDirection : TEXCOORD2;

float4 TextureWeights : TEXCOORD3;

float Depth : TEXCOORD4;

};

struct MTPixelToFrame

{

float4 Color : COLOR0;

};

MTVertexToPixel MultiTexturedVS( float4 inPos : POSITION, float3 inNormal: NORMAL, float2 inTexCoords: TEXCOORD0, float4 inTexWeights: TEXCOORD1)

{

MTVertexToPixel Output = (MTVertexToPixel)0;

float4x4 preViewProjection = mul (xView, xProjection);

float4x4 preWorldViewProjection = mul (xWorld, preViewProjection);

Output.Position = mul(inPos, preWorldViewProjection);

Output.Normal = mul(normalize(inNormal), xWorld);

Output.TextureCoords = inTexCoords;

Output.LightDirection.xyz = -xLightDirection;

Output.LightDirection.w = 1;

Output.TextureWeights = inTexWeights;

Output.Depth = Output.Position.z/Output.Position.w;

return Output;

}

MTPixelToFrame MultiTexturedPS(MTVertexToPixel PSIn)

{

MTPixelToFrame Output = (MTPixelToFrame)0;

float lightingFactor = 1;

if (xEnableLighting)

lightingFactor = saturate(saturate(dot(PSIn.Normal, PSIn.LightDirection)) + xAmbient);

float blendDistance = 0.99f;

float blendWidth = 0.005f;

float blendFactor = clamp((PSIn.Depth-blendDistance)/blendWidth, 0, 1);

float4 farColor;

farColor = tex2D(TextureSampler0, PSIn.TextureCoords)*PSIn.TextureWeights.x;

farColor += tex2D(TextureSampler1, PSIn.TextureCoords)*PSIn.TextureWeights.y;

farColor += tex2D(TextureSampler2, PSIn.TextureCoords)*PSIn.TextureWeights.z;

farColor += tex2D(TextureSampler3, PSIn.TextureCoords)*PSIn.TextureWeights.w;

float4 nearColor;

float2 nearTextureCoords = PSIn.TextureCoords*3;

nearColor = tex2D(TextureSampler0, nearTextureCoords)*PSIn.TextureWeights.x;

nearColor += tex2D(TextureSampler1, nearTextureCoords)*PSIn.TextureWeights.y;

nearColor += tex2D(TextureSampler2, nearTextureCoords)*PSIn.TextureWeights.z;

nearColor += tex2D(TextureSampler3, nearTextureCoords)*PSIn.TextureWeights.w;

Output.Color = lerp(nearColor, farColor, blendFactor);

Output.Color *= lightingFactor;

return Output;

}

technique MultiTextured

{

pass Pass0

{

VertexShader = compile vs_1_1 MultiTexturedVS();

PixelShader = compile ps_2_0 MultiTexturedPS();

}

}

//------- Technique: Water --------

struct WVertexToPixel

{

float4 Position : POSITION;

float4 ReflectionMapSamplingPos : TEXCOORD1;

float2 BumpMapSamplingPos : TEXCOORD2;

float4 RefractionMapSamplingPos : TEXCOORD3;

float4 Position3D : TEXCOORD4;

};

struct WPixelToFrame

{

float4 Color : COLOR0;

};

WVertexToPixel WaterVS(float4 inPos : POSITION, float2 inTex: TEXCOORD)

{

WVertexToPixel Output = (WVertexToPixel)0;

float4x4 preViewProjection = mul (xView, xProjection);

float4x4 preWorldViewProjection = mul (xWorld, preViewProjection);

float4x4 preReflectionViewProjection = mul (xReflectionView, xProjection);

float4x4 preWorldReflectionViewProjection = mul (xWorld, preReflectionViewProjection);

Output.Position = mul(inPos, preWorldViewProjection);

Output.ReflectionMapSamplingPos = mul(inPos, preWorldReflectionViewProjection);

Output.BumpMapSamplingPos = inTex/xWaveLength;

Output.RefractionMapSamplingPos = mul(inPos, preWorldViewProjection);

Output.Position3D = mul(inPos, xWorld);

return Output;

}

WPixelToFrame WaterPS(WVertexToPixel PSIn)

{

WPixelToFrame Output = (WPixelToFrame)0;

float4 bumpColor = tex2D(WaterBumpMapSampler, PSIn.BumpMapSamplingPos);

float2 perturbation = xWaveHeight*(bumpColor.rg - 0.5f)*2.0f;

float2 ProjectedTexCoords;

ProjectedTexCoords.x = PSIn.ReflectionMapSamplingPos.x/PSIn.ReflectionMapSamplingPos.w/2.0f + 0.5f;

ProjectedTexCoords.y = -PSIn.ReflectionMapSamplingPos.y/PSIn.ReflectionMapSamplingPos.w/2.0f + 0.5f;

float2 perturbatedTexCoords = ProjectedTexCoords + perturbation;

float4 reflectiveColor = tex2D(ReflectionSampler, perturbatedTexCoords);

float2 ProjectedRefrTexCoords;

ProjectedRefrTexCoords.x = PSIn.RefractionMapSamplingPos.x/PSIn.RefractionMapSamplingPos.w/2.0f + 0.5f;

ProjectedRefrTexCoords.y = -PSIn.RefractionMapSamplingPos.y/PSIn.RefractionMapSamplingPos.w/2.0f + 0.5f;

float2 perturbatedRefrTexCoords = ProjectedRefrTexCoords + perturbation;

float4 refractiveColor = tex2D(RefractionSampler, perturbatedRefrTexCoords);

float3 eyeVector = normalize(xCamPos - PSIn.Position3D.xyz);

float3 normalVector = float3(0,1,0);

float fresnelTerm = dot(eyeVector, normalVector);

float4 combinedColor = lerp(reflectiveColor, refractiveColor, fresnelTerm);

float4 dullColor = float4(0.3f, 0.3f, 0.5f, 1.0f);

Output.Color = lerp(combinedColor, dullColor, 0.2f);

return Output;

}

technique Water

{

pass Pass0

{

VertexShader = compile vs_1_1 WaterVS();

PixelShader = compile ps_2_0 WaterPS();

}

}

Published: 2009/12/17 15:42:18
